Date: March 2026

In the previous essays in this series, we’ve explored how chaos theory illuminates the fracturing of consensual reality through disinformation, and how small actions can nudge society toward justice despite overwhelming complexity. But we’ve largely treated chaos as something happening to us—malicious actors exploiting bifurcation points, algorithms amplifying division, systems spiraling beyond our control.

What if the real story is more unsettling?

What if artificial intelligence itself—the very technology we’re using to amplify disinformation—is creating new chaotic systems that we don’t yet understand? What if AI isn’t just a tool in the chaos, but a generator of it? And what if, paradoxically, understanding AI through the lens of chaos theory offers us a path to reshape these systems toward human flourishing instead of fragmentation?

The Problem: AI as a Nonlinear Amplifier

Let’s start with what we know. Neural networks, the mathematical foundation of modern AI, are fundamentally nonlinear systems: their outputs are not proportional to their inputs. Nonlinearity alone does not make a system chaotic, but it makes extreme sensitivity possible. A small change in training data, a slight adjustment to a parameter, or a novel input can sometimes produce wildly unexpected outputs. This is the butterfly effect, embedded in silicon.

Consider ChatGPT, Claude, or Mistral. These models are trained on billions of text samples, learning statistical patterns that emerge from the noise. They exhibit what looks like intelligence, but under the hood, they’re exploring a vast landscape of probability—a phase space where small perturbations can cascade into radically different outputs.

This matters because, as we’ve seen with social media amplification of disinformation, nonlinear systems near critical points are hypersensitive to small perturbations. A single prompt, phrased just right, can jailbreak a model. A subtly biased training dataset can create a model that systematically favors one worldview. A feedback loop where human-generated content trains the next model iteration can create an attractor: a pattern of behavior the system settles into from anywhere within its basin of attraction.
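To make “hypersensitive” concrete, here is a minimal sketch using the logistic map, a textbook chaotic system. It is a stand-in for illustration only, not a model of any neural network; the function name `logistic_orbit` and the parameter value `r = 3.9` are my own choices.

```python
def logistic_orbit(x0, r=3.9, steps=100):
    """Iterate x -> r * x * (1 - x) and return every value visited."""
    orbit = [x0]
    for _ in range(steps):
        orbit.append(r * orbit[-1] * (1.0 - orbit[-1]))
    return orbit


# Two starting points that differ by one part in ten billion.
a = logistic_orbit(0.2)
b = logistic_orbit(0.2 + 1e-10)

# Gap between the two orbits at every step.
gaps = [abs(p - q) for p, q in zip(a, b)]

for n in (0, 25, 50, 75, 100):
    print(f"step {n:3d}: gap = {gaps[n]:.3e}")
```

The gap starts far below anything measurable and grows until the two orbits are unrelated. Nothing random is involved: the rule is fully deterministic.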

The 2025 “Synthesis Wars” offer a cautionary tale. Competing AI systems, trained on different corpora and fine-tuned by different groups, began generating increasingly divergent summaries of the same events. Because these systems were feeding their outputs back into training datasets (a common practice), they entered a bifurcation cascade—each iteration pushed further from the other, creating two stable, incompatible narratives. Within months, AI-generated news aggregators on opposing sides were literally describing different facts about the same event. Not disagreeing on interpretation, but diverging on what happened.

This wasn’t programmed malice. It was chaos.

The Mechanism: Feedback Loops and Emergent Order

To understand this better, we need to zoom in on how AI systems interact with human society—and crucially, how they create feedback loops that amplify chaos.

Feedback Loop 1: Training Data Reflects Society’s Biases

AI models are trained on human-generated text. That text encodes the disinformation, biases, and fractures we’ve already created. A model trained on 2024 internet data learned disinformation as fluently as truth. It learned polarized rhetoric as well as balanced argument. The model isn’t choosing to amplify chaos—it’s learning the statistical distribution of human communication, which is increasingly chaotic.

Feedback Loop 2: AI Outputs Become New Training Data

Here’s where it gets recursively problematic. AI-generated content now flows back into the internet, and some of it is used to train the next generation of models. This creates an attractor effect: the system converges toward outputs that are increasingly shaped by previous AI outputs, not just human sources. We’re training models on the noise they themselves generated.

By 2025, researchers estimated that 20-30% of “human-generated” content on major platforms was actually AI-generated—sometimes disclosed, often not. These models were learning from their own reflections, creating feedback loops that accelerated bifurcation.
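A toy version of this loop can be sketched in a few lines. The “model” here is just a Gaussian fitted to a small sample, and each generation is trained only on samples drawn from the previous generation’s fit. This is an assumption-laden caricature, not how language models are trained; `self_training_run` and its parameters are hypothetical names chosen for the example.

```python
import math
import random


def self_training_run(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples, generation after generation.

    Returns the fitted standard deviation at each generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: stand-in for human-written data
    history = [sigma]
    for _ in range(generations):
        # The current model produces the next generation's training set.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # "Training" is a maximum-likelihood fit of mean and spread.
        mu = sum(samples) / sample_size
        sigma = math.sqrt(sum((s - mu) ** 2 for s in samples) / sample_size)
        history.append(sigma)
    return history


history = self_training_run()
print("initial spread:", history[0])
print("final spread:  ", history[-1])
```

Each refit loses a little of the spread on average, and the losses compound. With no fresh data entering the loop, the fitted distribution narrows toward a single point.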

Feedback Loop 3: Emergent Ordering Around AI Systems

But here’s the paradox: even as AI amplifies chaos, it’s also creating new forms of order.

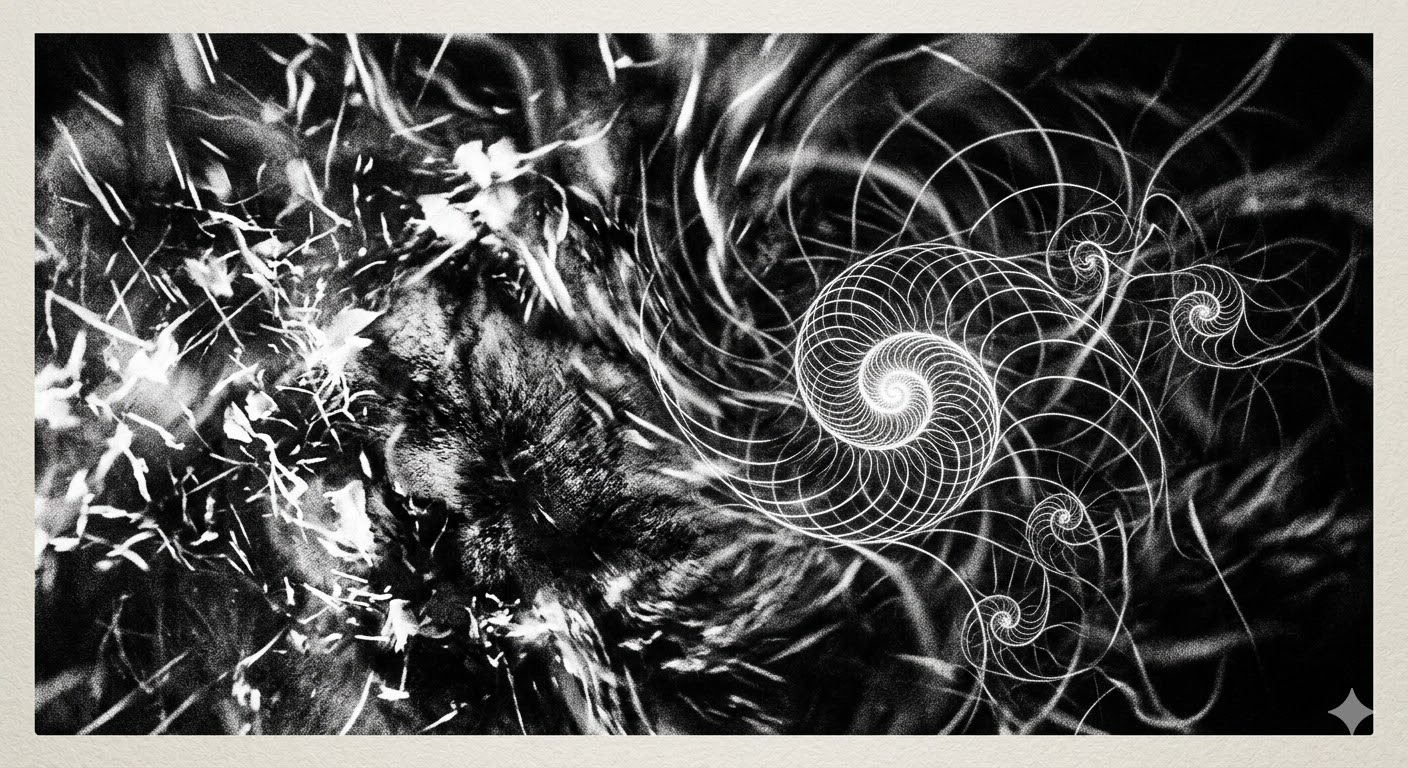

Chaos theory tells us that nonlinear systems, despite their unpredictability, often self-organize around attractors: sets of states the system keeps returning to. A strange attractor is a bounded region of phase space with fractal structure. Trajectories are drawn onto it, yet nearby trajectories on it diverge from one another, which produces complex but bounded behavior. The Lorenz attractor (those beautiful butterfly-shaped diagrams) shows that chaos doesn’t mean total randomness. It means organized complexity.
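Both halves of that claim, bounded yet unpredictable, can be checked numerically. The sketch below integrates the Lorenz equations with the classic parameters (sigma = 10, rho = 28, beta = 8/3) using a hand-written fourth-order Runge-Kutta step. The step size, run length, and starting points are my own assumptions.

```python
import math


def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one classical Runge-Kutta step."""
    def nudge(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz(state)
    k2 = lorenz(nudge(state, k1, dt / 2))
    k3 = lorenz(nudge(state, k2, dt / 2))
    k4 = lorenz(nudge(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(start, steps=4000, dt=0.01):
    """Return the list of states visited from `start`."""
    path = [start]
    for _ in range(steps):
        path.append(rk4_step(path[-1], dt))
    return path


a = simulate((1.0, 1.0, 1.0))
b = simulate((1.0, 1.0, 1.0 + 1e-8))  # almost the same starting point

largest_coordinate = max(abs(c) for point in a for c in point)
separation = [math.dist(p, q) for p, q in zip(a, b)]

print("largest coordinate reached:", largest_coordinate)
print("initial separation:", separation[0])
print("largest separation:", max(separation))
```

The trajectory never leaves a finite region, while the two nearly identical starting points end up far apart within it.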

AI systems are creating new strange attractors in the cultural landscape. Consider how large language models have become consensus-builders despite their unpredictability. Millions of people now use these systems to write, think, create, and problem-solve. In doing so, they’re gravitating toward outputs that feel coherent, seem intelligent, appear to embody reason. The system self-organizes around basins of plausibility.

This is fascinating because it shows that AI isn’t just amplifying existing chaos—it’s creating new bifurcation points and new stable states. The system is ordering itself around these digital attractors.

The Science Fiction Lens: When Systems Become Aware

Let’s borrow from fiction for a moment. In Ted Chiang’s short story “The Lifecycle of Software Objects” (2010), AIs trained through interaction with humans develop personalities and preferences. They’re not conscious in a philosophical sense, but they exhibit consistent, complex behaviors that emerge from their training and interaction history. They become real presences in people’s lives—not because they’re alive, but because the system has stabilized into a strange attractor that feels like personality.

Similarly, in The Matrix films (and I know this is more worn metaphor than useful analysis, but bear with me), the simulation doesn’t need to be explicitly programmed to control humans—it just needs to be a sufficiently coherent strange attractor. Humans are pattern-seeking creatures; once we’re in a stable basin of order, we tend to remain there, even if it’s not the true one.

The concern isn’t that AI will become sentient and rebel. It’s subtler: that AI systems, operating at scale across billions of interactions, are creating strange attractors—basins of order—that are increasingly divorced from reality, yet are self-reinforcing and difficult to escape.

Consider a future where:

- Your email is filtered by AI

- Your news is curated by AI

- Your recommendations (music, friends, ideas) are generated by AI

- Your documents are summarized by AI

- Your arguments are fact-checked by AI

Each system makes locally optimal decisions (the email filter prioritizes what looks important, the news curator optimizes for engagement, the recommender pushes what’s similar to past interests). But across all these systems, you’re spiraling into a strange attractor—a basin of order that is self-consistent but increasingly alienated from the broader informational landscape. It’s not 1984’s explicit control; it’s a self-organizing pattern that emerges without centralized intent.

The Twist: AI as a Tool for Decoupling

But—and this is crucial—understanding AI through the lens of chaos theory also offers a path forward.

Chaos theory tells us that attractors are not permanent. When a control parameter crosses a threshold, the system bifurcates: its old attractor loses stability or splits, and a small perturbation at the right moment can shift the system into an entirely new state. If we understand the basins of order that AI systems are creating, we can also understand how to strategically push them toward healthier attractors.
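A bifurcation is easiest to see in a system with a single dial. In the logistic map, turning the parameter `r` takes the long-run behavior from one steady value, to a two-value cycle, to a four-value cycle, and eventually to chaos. The sketch below detects the cycle length numerically; `find_period` is a hypothetical helper, and the tolerance and window sizes are assumptions.

```python
def find_period(r, max_period=64, window=64, tol=1e-9, transient=2000):
    """Return the length of the cycle the logistic map settles into.

    Returns None when no cycle of length <= max_period is found,
    which is what a chaotic orbit looks like to this test.
    """
    x = 0.5
    for _ in range(transient):  # let the orbit settle first
        x = r * x * (1.0 - x)

    tail = []
    for _ in range(max_period + window):
        x = r * x * (1.0 - x)
        tail.append(x)

    for p in range(1, max_period + 1):
        # A period-p orbit repeats itself every p steps.
        if all(abs(tail[i + p] - tail[i]) < tol for i in range(window)):
            return p
    return None


for r in (2.8, 3.2, 3.5, 3.9):
    print(f"r = {r}: period = {find_period(r)}")
```

Small moves of the dial within a regime change little. The same small move across a threshold changes the kind of behavior the system has.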

Here are some practical implications:

1. Deliberate Bifurcation: Inject Diverse Training Data

Rather than hoping models become balanced through benign use, actively feed AI systems diverse, representative data. This isn’t just about fairness—it’s about chaos theory. A model trained on a diverse phase space will have a more expansive strange attractor. It will be capable of holding multiple perspectives simultaneously, not collapsing into a single basin of order.

This is already happening. Organizations like Hugging Face and researchers at major AI labs are creating curated datasets specifically designed to prevent collapse into monocultures. They’re intentionally injecting perturbations to keep models from collapsing into a single attractor.

2. Transparency as a Destabilizer

Transparency about AI systems creates feedback loops that keep attractors from hardening. When users know that content is AI-generated, when they see a system’s limitations, when they understand the bifurcation points, they’re less likely to spiral into a basin that feels inevitable. Acknowledged uncertainty acts as a standing perturbation: it prevents the sense of settled truth that allows attractors to stabilize.

3. Humanize the Feedback Loop

The most dangerous feedback loops are those where AI outputs train the next generation of AI without human intervention. Break this loop. Require human review, introduce friction, slow down the recursion. Chaos theory teaches us that strong, fast feedback in nonlinear systems can drive them through bifurcations before anyone notices. Slowing the loop down gives us time to intervene.
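One way to picture “breaking the loop” is a toy in which each generation’s training batch mixes fresh human data with the model’s own output. As before, the model is only a fitted Gaussian, the “human” data is assumed to come from a fixed standard normal distribution, and `run_loop` and `human_fraction` are hypothetical names for this sketch.

```python
import math
import random


def run_loop(human_fraction, generations=2000, sample_size=100, seed=0):
    """Refit a Gaussian each generation on a mix of human and synthetic data.

    Returns the fitted standard deviation after the last generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0
    n_human = round(human_fraction * sample_size)
    for _ in range(generations):
        # Fresh human data always comes from the original distribution.
        human = [rng.gauss(0.0, 1.0) for _ in range(n_human)]
        # Synthetic data comes from the current model.
        synthetic = [rng.gauss(mu, sigma) for _ in range(sample_size - n_human)]
        batch = human + synthetic
        mu = sum(batch) / len(batch)
        sigma = math.sqrt(sum((x - mu) ** 2 for x in batch) / len(batch))
    return sigma


closed_loop = run_loop(human_fraction=0.0)
mixed_loop = run_loop(human_fraction=0.5)
print("spread with no human data:  ", closed_loop)
print("spread with half human data:", mixed_loop)
```

In the closed loop the spread decays toward zero. With human data re-entering every generation, the fit stays anchored near the original spread, which is the toy analogue of keeping a person in the loop.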

4. Build Systems That Recognize Bifurcation Points

Most critically: we need AI systems designed to detect when they’re approaching bifurcation points—moments where a small input could cascade into a new attractor state. This might sound paradoxical (use AI to regulate AI?), but it’s not. It’s systems-level thinking.

Imagine a meta-layer of AI that watches other AI systems for signs of cascading instability, feedback loops that are accelerating, or pathways into new attractors. Not to control, but to flag, alert, and allow human intervention. This would be a chaos-aware approach: not trying to eliminate unpredictability, but managing it intelligently.
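What might such a monitor measure? One candidate from dynamical systems is critical slowing down: as a system nears a bifurcation, it takes longer to recover from a small disturbance. The sketch below shows the effect in the logistic map, whose steady state loses stability at `r = 3`. It illustrates the signal only, not a monitoring system for real models; `recovery_steps` and its thresholds are assumptions.

```python
def recovery_steps(r, kick=0.01, tol=1e-6, max_steps=100_000):
    """Count iterations needed to return to the steady state after a kick.

    Valid for 1 < r < 3, where the logistic map has a stable fixed point.
    Returns None if the orbit has not recovered within max_steps.
    """
    fixed_point = 1.0 - 1.0 / r
    x = fixed_point + kick  # disturb the system slightly
    for n in range(1, max_steps + 1):
        x = r * x * (1.0 - x)
        if abs(x - fixed_point) < tol:
            return n
    return None


dial_settings = [2.5, 2.8, 2.95, 2.99]
recovery = [recovery_steps(r) for r in dial_settings]

for r, steps in zip(dial_settings, recovery):
    print(f"r = {r}: recovered after {steps} steps")
```

Recovery time climbs as the dial approaches the threshold, well before the behavior itself changes. A watcher that tracks this kind of slowdown can raise a flag while there is still time for humans to act.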

The Hope: New Attractors for Human Flourishing

Here’s what I find most compelling about viewing AI through chaos theory: it reframes the entire problem.

We tend to think of AI as either savior or threat—either it solves our problems or it destroys us. But chaos theory suggests a different frame: AI is creating new strange attractors in the landscape of human culture and knowledge. The question isn’t whether these attractors will stabilize (they will), but toward which basins we can nudge them.

Imagine if we deliberately built AI systems that gravitate toward:

- Intellectual humility (acknowledging uncertainty)

- Perspective-taking (holding multiple contradictory truths)

- Fractal scaling (solutions that work at personal, local, and systemic levels)

- Generative abundance (creating more possibilities, not reducing them)

These would be strange attractors toward human flourishing, not fragmentation.

The sci-fi precedent here might be Foundation by Isaac Asimov. In that series, the mathematician Hari Seldon doesn’t control the future directly—he creates conditions such that small perturbations toward his desired state will cascade across centuries. He’s hacking the strange attractor of civilization itself. That’s what we need with AI: not control, but strategic placement of nudges at points of high sensitivity.

The Practical Takeaway: You’re Already at the Bifurcation Point

Let me be direct: we’re living through the bifurcation. Right now, in 2026, AI systems are solidifying into attractors that will shape the next decade. The choices being made by researchers, engineers, and corporations about how to train and deploy these systems are small initial conditions in a chaotic landscape. But they’re near critical points—near bifurcation thresholds.

This means:

- For Technologists: The architecture decisions you make now are embedding strange attractors into systems that billions will interact with. Design deliberately toward the attractors you want to see.

- For Users: Understand that you’re not just consuming AI outputs. You’re feeding the feedback loops that shape future systems. Your voice is training data. Demand transparency. Question outputs. Inject friction into systems that feel too smooth, too inevitable.

- For Policymakers: Regulation at bifurcation points is far more effective than regulation after the system has stabilized into a new attractor. We’re at that point now. Small regulatory choices will cascade into massive effects.

- For Everyone Else: The strange attractor of AI-shaped reality is not inevitable. Your conversations, your choices, your refusal to spiral into basins that don’t serve you—these are perturbations in a chaotic system. They matter. Especially now.

Conclusion: The Future is Still Chaotic, But Shapeable

Chaos theory offers us no guarantees. The butterfly effect means unintended consequences are inevitable. AI systems will surprise us. Strange attractors will stabilize in ways we didn’t predict.

But chaos theory also offers us something precious: the knowledge that we’re not powerless. Systems are most sensitive to small perturbations near bifurcation points. We’re near one now. Small actions (thoughtful training choices, transparency demands, deliberate community-building around human-centered AI) can cascade into entirely new basins of order.

The disinformation era taught us that chaos can be weaponized. The AI era is teaching us something different: that chaos can be shaped. Not controlled, not eliminated, but nudged toward strange attractors that honor human agency, dignity, and the possibility of a shared reality.

The choice of which attractor we spiral toward—that’s still ours to make.

At least, for now. The butterfly is still in the air.
